    Py4JJavaError                             Traceback (most recent call last)
    /usr/hdp/current/spark2-client/python/pyspark/sql/utils.py in deco(*a, **kw)
         64         except py4j.protocol.Py4JJavaError as e:

    /usr/hdp/current/spark2-client/python/lib/py4j-0.10.6-src.zip/py4j/protocol.py in get_return_value(answer, gateway_client, target_id, name)
        319                     "An error occurred while calling {0}{1}{2}.\n".

    Py4JJavaError: An error occurred while calling o79.select.
    : org.apache.spark.sql.AnalysisException: cannot resolve '`sub_tot`' given input columns:
    +- Relation csv
        at .$AnalysisErrorAt.failAnalysis(package.scala:42)
        at .$$anonfun$checkAnalysis$1$$anonfun$apply$2.applyOrElse(CheckAnalysis.scala:88)
        at .$$anonfun$checkAnalysis$1$$anonfun$apply$2.applyOrElse(CheckAnalysis.scala:85)
        at .$$anonfun$transformUp$1.apply(TreeNode.scala:289)
        at .$.withOrigin(TreeNode.scala:70)
        at .(TreeNode.scala:288)
        at .$$anonfun$transformExpressionsUp$1.apply(QueryPlan.scala:95)
        at .$$anonfun$1.apply(QueryPlan.scala:107)
        at .$1(QueryPlan.scala:106)
        at .$apache$spark$sql$catalyst$plans$QueryPlan$$recursiveTransform$1(QueryPlan.scala:118)
        at .$$anonfun$org$apache$spark$sql$catalyst$plans$QueryPlan$$recursiveTransform$1$1.apply(QueryPlan.scala:122)
        at $$anonfun$map$1.apply(TraversableLike.scala:234)
        at $class.foreach(ResizableArray.scala:59)
        at .foreach(ArrayBuffer.scala:48)
        at $class.map(TraversableLike.scala:234)
        at (Traversable.scala:104)
        at .$apache$spark$sql$catalyst$plans$QueryPlan$$recursiveTransform$1(QueryPlan.scala:122)
        at .$$anonfun$2.apply(QueryPlan.scala:127)
        at .(TreeNode.scala:187)
        at .(QueryPlan.scala:127)
        at .(QueryPlan.scala:95)
        at .$$anonfun$checkAnalysis$1.apply(CheckAnalysis.scala:85)
        at .$$anonfun$checkAnalysis$1.apply(CheckAnalysis.scala:80)
        at .(TreeNode.scala:127)
        at .$class.checkAnalysis(CheckAnalysis.scala:80)
        at .(Analyzer.scala:92)
        at .(Analyzer.scala:105)
        at .$lzycompute(QueryExecution.scala:57)
        at .(QueryExecution.scala:55)
        at .(QueryExecution.scala:47)
        at .Dataset$.ofRows(Dataset.scala:74)
        at .$apache$spark$sql$Dataset$$withPlan(Dataset.scala:3295)
        at .select(Dataset.scala:1307)
        at (Unknown Source)
        at (DelegatingMethodAccessorImpl.java:43)
        at .invoke(Method.java:498)
        at (MethodInvoker.java:244)
        at (ReflectionEngine.java:357)
        at (AbstractCommand.java:132)
        at (CallCommand.java:79)
        at py4j.GatewayConnection.run(GatewayConnection.java:214)

    During handling of the above exception, another exception occurred:

    AnalysisException                         Traceback (most recent call last)
          2 where("ord_status in ('COMPLETE','CLOSED')"). \
          3 join(df_ord_item,df_ord.ord_id == df_ord_item.ord_item_ord_id).show()

    /usr/hdp/current/spark2-client/python/pyspark/sql/dataframe.py in select(self, *cols)

    /usr/hdp/current/spark2-client/python/lib/py4j-0.10.6-src.zip/py4j/java_gateway.py in __call__(self, *args)
       1158         answer = self.gateway_client.send_command(command)
    -> 1160             answer, self.gateway_client, self.target_id, self.name)

    /usr/hdp/current/spark2-client/python/pyspark/sql/utils.py in deco(*a, **kw)
         68             if s.startswith('org.apache.spark.sql.AnalysisException: '):
    ---> 69                 raise AnalysisException(s.split(': ', 1)[1], stackTrace)
         70             if s.startswith('org.apache.spark.sql.catalyst.analysis'):
         71                 raise AnalysisException(s.split(': ', 1)[1], stackTrace)

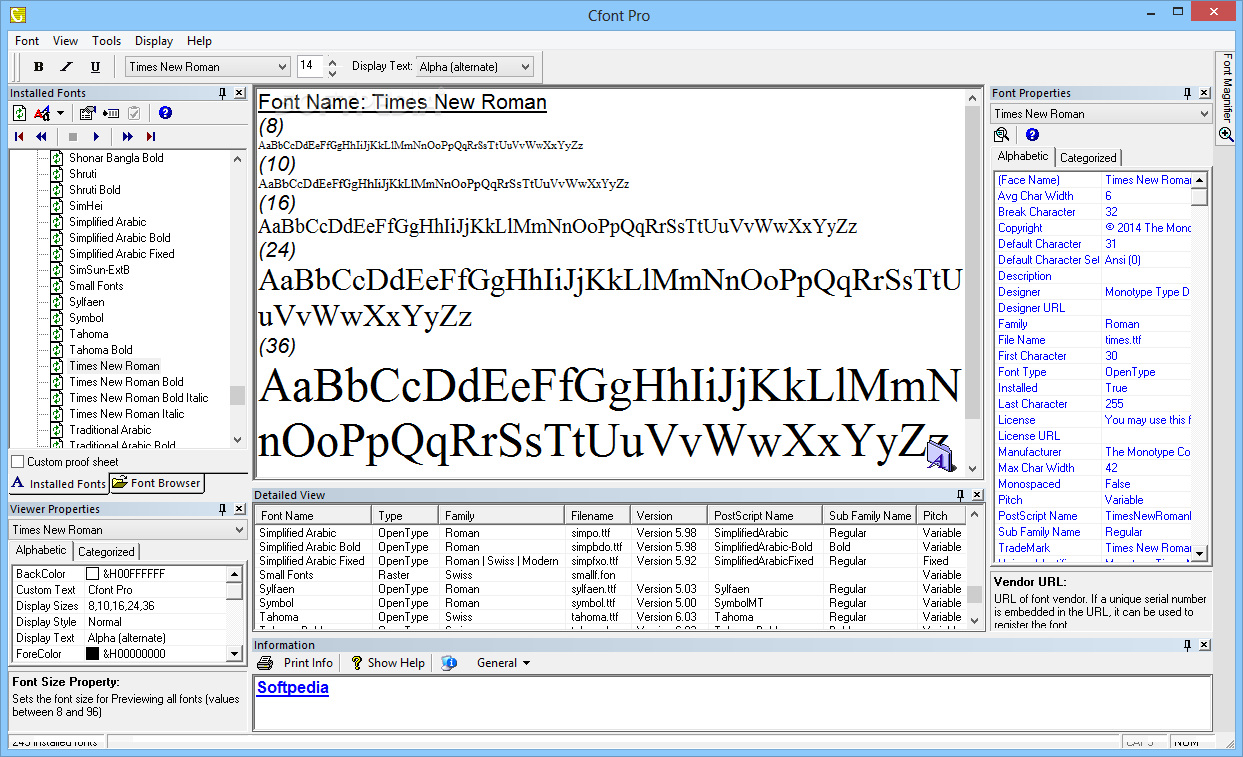

Please refer to the attached screenshot. Also, when I select multiple columns from both DataFrames, I get an error. If I select one column from the df_ord DataFrame, the result shows that one column from df_ord plus the remaining columns of the df_ord_item DataFrame, which is incorrect.

I am not able to select the desired columns using `select` on a DataFrame.